1.

It is Christmas and everyone at the Preservation Pub is beyond redemption. I am seeing double, not thinking clearly. I have driven eight hours from Florida up the age-worn spine of the Appalachians to be here tonight. On the stage, a ragtag crew of backwoodsmen, mechanics, and beer drinkers are teetering on the brink of chaos as they work their way through an energetic set somewhere in the feverish frontier between blues, funk, and rock. There are seven of them in all: big, working-class men like miners in the old Pennsylvania polka bands of the last century. They look like they would be more comfortable twisting wrenches than playing for us here tonight, but they’ve chosen instead to give us this Christmas offering. I am grateful for it. It may have something to do with the drinks, but we are all grateful.

There is a guy in a fedora playing a baritone saxophone, probably the first person to take the hat or the instrument seriously in a room like this since the New Wave era. There is a big guy working a trombone and leading ring shouts into a microphone above his head. “Roll on!” he shouts, hoarse from exertion. “Roll on!” There are two guitarists hiding near the back of the stage. I think they might have long hair, but it’s a long way away and the whiskey is starting to take hold. I can see the bassist, however, well enough to tell that he is the straight man in this ensemble, competent but somewhat out of place. He wears a Fender T-shirt and I joke with my wife about “wearing the shirt of the band to the band.” In my alcoholic, road-weary haze, this observation is impossibly funny. The drummer is invisible behind the mass.

There is the leader, finally, seated at a little Wurlitzer stage left. He is Jon ‘Cornbred’ Worley, a musician of either renown or infamy, depending on whom you ask, and he has the room spinning even more erratically than the spirits flowing from the bar.

“A long time ago, in a galaxy far away,” Worley drawls, his voice dry and raspy, like the Marlboro Man in an iron lung, “the little Baby Jesus was born in a manger.” We follow along, unable in our state to give his words much thought. “He had so much love for y’all,” he continues, “so much love even in his little baby toe. But here y’all are, heathens on Christmas.” Worley is right. Here we are, heathens together.

It is Christmas night, 2021, and no one is wearing a mask. No one is “practicing social distancing,” a phrase from last year that already feels out of date and awkward, like “riding the information superhighway.” There are no bottles of hand sanitizer on the bar, no plastic screens separating the booths. No one tried to limit the number of people here tonight, and there is little room left for anyone else to come in.

Somewhere in the world, perhaps already in this room, a new variant of the coronavirus is running rampant. The new variant spreads prodigiously. Experts warn it may bypass the borrowed immunity of vaccinated people, potentially resetting the clock to the spring of 2020. These experts have named the new variant Omicron, from the Greek letter. It is the first time many people have heard or seen the word. The assignment of a new Greek letter imparts distance, for the time being. We will deal with Omicron when it arrives. For now, though, here we are, heathens at the Preservation Pub.

2.

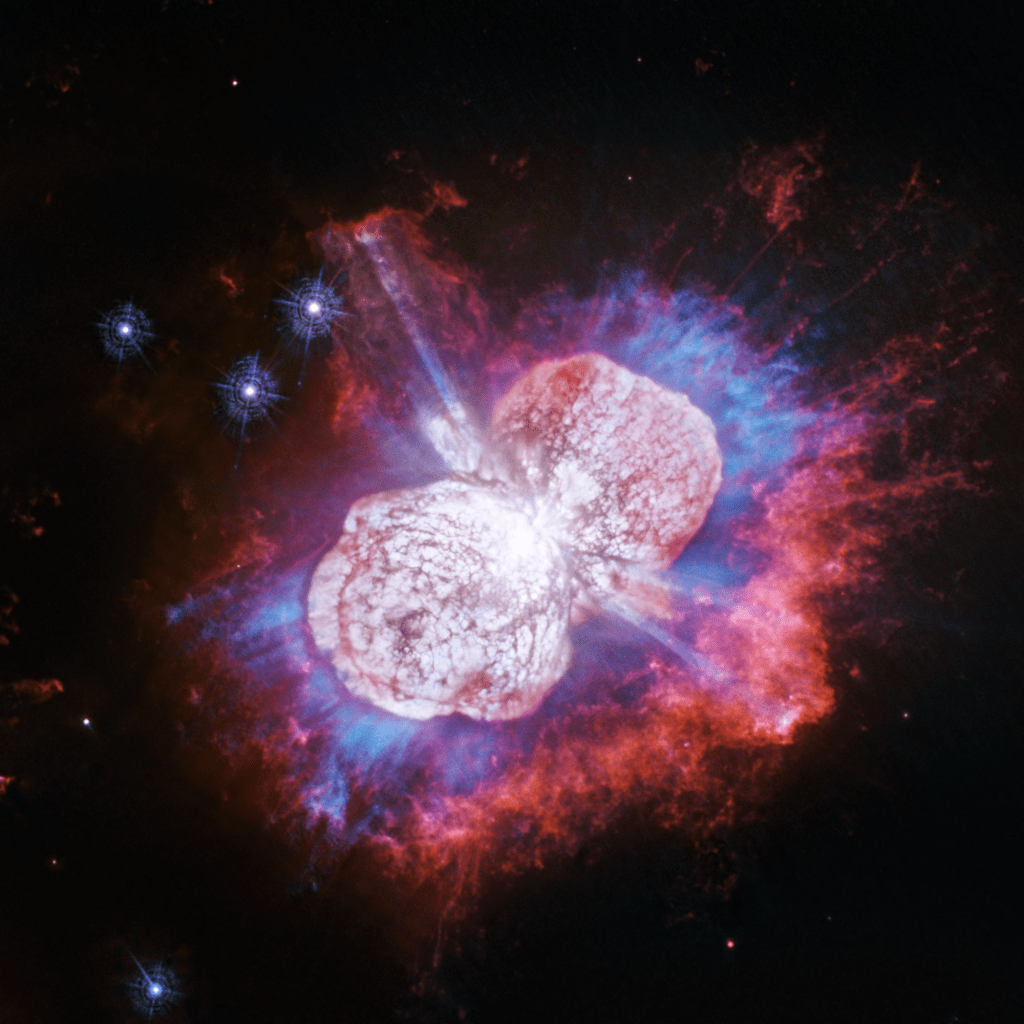

Naming and renaming, drinking and performing: these are acts of remembering and forgetting. How things are remembered and why they are forgotten give shape to a community. They also hold the key to deconstructing and understanding a community, and to learning what it feels like to live in one. Some seek memory in oral history, others turn to the archives. I had a different objective. Over the next few days in Knoxville, I would strive to uncover a counter-archive of sound: to explain what the city remembers and how it forgets, primarily by listening. I came to the city with a strange hypothesis. Cacophony, music, and silence exist on a spectrum; just as a scientist analyzes the spectrum of some distant exoplanet to infer whether it hosts life, I could read the spectrum of sound in Knoxville to hear what it might teach me about life there.

Close listening revealed a city strapped into a scorched-earth drag race of growth and development. The process is nothing new. Like the coronavirus variant rapidly mutating and spreading among the euphoric crowd at the Preservation Pub, Knoxville and the Appalachian region are constantly evolving. In 1844, a traveler called the town a “poor, neglected looking place.” In 1857, another visitor said that he was “struck with the thriving looks” of the area, shaped by “a stalwart, laborious, independent population; the pith of the United States.” Later in the nineteenth century, according to historian William Bruce Wheeler, widespread coal use “gave the city a distinctly grimy, sooty appearance” and “street paving was spotty at best.” Mountains, rivers, railroads, turnpikes, pipes, and wires continue to shape and reshape the city.

These changes have a sound. Knoxville’s most illustrious son, writer James Agee, was acutely aware of this fact. His masterwork, A Death in the Family, opens with a prolonged meditation on the city’s suburban soundscape circa 1915. Read the prologue again and the sound is unmistakable, paragraph after paragraph of water hoses sputtering with streams of water, “mothers hushing their children,” cicadas and crickets singing in the trees “like the noises of the sea and of the blood her precocious grandchild.” After this resonant opening, death comes for the titular family against a backdrop of powerful quietude. Approach Knoxville, Agee insists beautifully, with open ears.

I found an equally rich soundscape, but pitched to a different register now that the city has outgrown the sleepy railroad town of more than a century ago. From the stage-managed city center to the suburban frontier stampeding across the ancient, rolling mountains surrounding it, Knoxville is transforming itself this time around into a cookie-cutter copy of every other American city. I came looking for something distinctive. What I found was what I left behind, a sort of Appalachian Orlando.

Travel writing, I’m told, should pull out the exciting differences in a place, so readers can escape their humdrum reality and dream of faraway lands. If you look—and listen—closely to Knoxville, you will find unique and beautiful things. You’re not likely to find Cornbred Worley on Christmas night in too many American cities, after all. You can climb giant stone steps up the side of a mountain in Knoxville. You can take classes at a world-class university. You can check out the site of a World’s Fair. You can swim in mountain streams up there, you can drink great local beer, or you can visit amazing used bookstores if that’s your thing. You can play music, make art, make love. I implore you to go.

Travel should also force us to confront some hard facts, though, and that is what I’d like to explore in this essay. This is where we all live out here in America: a creeping sprawl of lookalike subdivisions, connected to one another and the world beyond by an overbuilt but rapidly deteriorating network of roads, which lead along the way to taste-alike restaurants and shop-alike strip malls. Together, all of this robs us of our wealth, health, and well-being. Sometimes you have to hit the road to see what’s right in front of your face.

The flattening and spreading of American cities has been moving at a breakneck pace for fifty years, but listening to Knoxville now reveals an increasingly urgent dissonance underscoring the machine of growth. This dissonance feels new. Sprawl seems to have shifted from a major to a minor key, as though the party ought to have been over ten or twenty years ago but no one knows what to do.

3.

I carried a deeper purpose on this trip, somewhat closer to my chest. I did not choose Knoxville at random. My family decided to move there from Florida, out of the blue, earlier this year. Around the first of February, my mom called and told me that an internet real estate company had made an unbelievable offer on their house: the kind of offer only an out-of-control algorithm juiced by an infinite money cheat code could make. Thinking they would be foolish to turn such an offer down, my Jacksonville family was soon packing their belongings, selling as much as they could, and painting the walls of the house where I had spent my most formative years, in preparation for a walkthrough with the internet broker on Zoom.

“Better get out of there before they come to their senses,” I said. And then, more seriously, “Where are you going?”

“We’re looking up in the mountains,” Mom said, “around Gatlinburg.”

My Florida family is not alone in its lust for the mountains. Nearly everyone I know (attracted primarily, it seems, by breweries and hiking trails) has considered moving to the lush, green region of mountains and rivers bounded by Asheville, North Carolina, in the east and Gatlinburg, Tennessee, in the west. If they haven’t considered it themselves, they know someone else who has already relocated there. For those of us living in the wake of prior booms, the present rhymes with the past. Speaking of a similar moment of explosive growth here in my home state, William Jennings Bryan remarked that Miami in the 1920s was the only city in the world where “you can tell a lie at breakfast that will come true by evening.” Bursting with an influx of middle-class families desperate to escape the flat, hot Sunbelt, Appalachia is the New Florida. Fairy tales told at breakfast are awash in craft IPAs down at the dog park by sundown.

On Valentine’s Day, we drove to Jacksonville and I spent the last night in my childhood bedroom. I longed to take it all in, savoring the friendly darkness and familiar quiet until the dull light of dawn streamed through the blue miniblinds, and then it was time to go.

Later, back at home, I mourned the old house by looking at pictures on Google Street View. There was my mother’s car. There were the bushes I had trimmed every summer. There was the concrete we poured for a basketball hoop, scratching “1996” on the surface with a stick to commemorate the year. There was the bedroom window behind which I imagined a thousand futures. I hadn’t lived there since 2005, but I couldn’t imagine never crossing the threshold again. Even now, when I think of my mother, I think of her in the house there.

I drove home with a box full of old keepsakes and report cards that sat in the trunk of the car until St. Patrick’s Day. When I was done looking at my house on Street View, I looked at pictures of Knoxville. I imagined what it would be like to live there. I had to admit, those Smoky Mountain trails looked pretty good. And I had heard good things about the craft breweries.

4.

To hear a place, to know it, you must take part. On Christmas Day we packed up the dog and set out from my wife’s childhood home, still intact and inhabited, and made our way up the long axis of Alabama to Tennessee. Andalusia, Greenville, Montgomery, Birmingham, Gadsden, Fort Payne, and dozens of other places existed somewhere in brilliant sunlight beyond the verge of the highway. We stopped in Chattanooga as dusk fell to night and ate sandwiches from a gas station somewhere below the roaring interstate up on a hill over our heads.

Ninety minutes after the sandwiches in Chattanooga we were in a townhouse off Kingston Pike having Christmas dinner with Mom. The rest of the family had already eaten, but we caught up with one another over the dining table next to the couch anyway, paying little mind to the tinkling bells and Christmas carols playing on commercials in the living room behind us. The road was still with us, however, and dinner felt rushed, cramped, and unreal, like a jet-lagged meal at the airport. It was still early when we left, pleading exhaustion, and made our way down Interstate 40 to a hotel across town.

There are two rivers in Knoxville, both heavily engineered. First there is the true waterway, the Tennessee River. Named for a Cherokee town that once stood on its banks, the river is more famously known now for its place in the Great Depression. Downtown there still stands a building bearing the logo of that great New Deal creation, the Tennessee Valley Authority. It was the TVA that brought electricity to the Appalachians, harnessing the power of the flowing river to light up the region and creating, along the way, a series of wide lakes that draw the ardor of bass fishermen and the ire of conservationists. The old Cherokee town of Tanasi that gave the river its name now lies beneath one of those lakes just a few minutes down Interstate 40.

Next there is the great steel and concrete river and its branching tributaries, Interstates 40, 640, 275, and so on, which divide the city into rough quadrants. These roads are unavoidable, ever-present. Odds are, this is how you will arrive here: veering off the interstate after an interminably long drive, nervously attentive to the voice of your digital assistant, Google or Siri or Garmin or whatever, as they instruct you to turn onto one of the city’s arterial roads. From the south, coming up from Atlanta or Birmingham, you will have trooped over a hundred miles or so of pockmarked and grooved highway spanning the sparsely populated region between Chattanooga and Knoxville. From the east, you will have come down through the mountains, clutching the steering wheel and sweating as you roll down the long, curving grade toward the Tennessee River valley. You turn down the podcast that has carried you across the long, desolate road, and pay attention.

For the next few days, everywhere we went, we reckoned with the road. Later that night, long after Cornbred Worley had packed up his Wurlitzer, I could hear the concrete river outside the hotel flowing still, flowing through the night.

5.

We decamped for the week at a Country Inn & Suites two turns off the highway offramp. It stood between a retail sign shop and a claustrophobic cluster of doctors’ offices, all brick and white trim, their businesslike propriety out of place next to the motel. There was a liquor store across the street, a Weigel’s gas station at the end of a long drive. Ragged men with suitcases or bicycles haunted the liminal space between the liquor store and the gas station, waiting like passengers at a bus terminal for good fortune to shine on them. At night, when the road slowed to a sort of pianissimo hiss, we could hear their voices in the chill air.

Listen to a place like this. The Country Inn & Suites is a four-story pile of stucco and wood, beige with rust-colored highlights, virtually identical to the thousands of other stick-built suburban establishments that have taken the place of the old business districts in the United States. Derided by the country’s trendsetters and mostly ignored by its elites, these hotels, strip malls, fast casual restaurants, and gas stations make up the very fabric of existence for most Americans. These places resonate with their voices as a result.

Take Ethan, for example. When we arrived, Ethan was working behind the counter. Tall and lean, dark haired beneath a Nike cap, Ethan was dressed in a T-shirt and basketball shorts, as though he were checking us in to the hotel from his own living room. In many ways, he was. We would come to know Ethan well over the coming days, because he was always there.

Entirely professional at check-in, Ethan let his hair down on the bench out in front of the hotel. We ran into him on the way out to Preservation Pub and stopped for a chat. Between long drags from an American Spirit cigarette and trips back inside to help customers, he explained that he lived in the hotel.

“I’ve worked every day for the past 22 days,” he said, exhaling a cloud of smoke toward the parking lot beside us. “I’m thankful to the owner, don’t get me wrong,” he continued. “He took me in when I was in a real bad place, but I desperately need a day off.”

He inhaled again and continued with a smoky laugh. “Today I got to drive to Office Depot to pick up an ink cartridge and it was like the best thirty minutes I’ve had in weeks.” He stubbed out the cigarette and looked at us with a nervous grin.

“Anyway, I’ll be here if you need anything.”

That conversation with Ethan haunted the rest of the night. Shielded from the noise of the road, the front desk at the Country Inn was an envelope of quiet in which he was captive, like a rabbit in a snare. At the Preservation Pub, I saw the snare wrapped loosely around the neck of the bartender. I saw it closing in on the waiter counting tips at the dim and silent counter of the Italian restaurant next door. I saw it wrapped around gas station clerks watching the long hours flow like glaciers in the night, and around truck drivers lashed to the steering wheel, the droning voices of podcast hosts and talk radio personalities pouring into their ears to mask the endless audio blur of the road. Blasting horns, slinging guitars, and jamming chords on the keyboard offer a sort of freedom from the envelope of quiet captivity that ensnares us, but this freedom is fleeting.

6.

The next day, head pounding like the massive bass drum the marching band rolls onto the football field at homecoming, I woke up from a terrible dream. In the dream, I was driving through a raging storm, high up in the mountains. Wind and rain lashed the car as lightning cracked and sizzled, dancing among the rocky crags overhead. It was a struggle to keep the car on the rain-slicked road as I negotiated the steep grades and twisting switchbacks. Higher I drove, higher and higher, as the mountains appeared and disappeared around and above me with the blinding intervals of popping electricity and primeval darkness. After a few moments of this frenetic driving horror, I noticed that the mountains were growing larger, craggier, newer. The rolling green hills of the Appalachians gave way to the dramatic stone peaks of the Rockies, Andes, Himalayas, turning back the clock millions of years with each blinding flash. Larger and larger they loomed, until I was scaling a mountain road at 20,000 feet, up where airliners cruise. Struggling for breath and freezing, I strained to see the road up ahead, but there was no more road. The car barreled forward, toward the sheer cliff edge, faster and faster….

I awoke with a start. Winter sunlight peeked through the cracks in the canvas drapery across the room. My wife was still asleep in the bed next to me. Rattled by the alpine nightmare, I rolled out of bed and started the day.

I waved weakly at Ethan on my way toward the sliding glass doors to locate a strong cup of coffee. Ethan, either still on duty from the night before or back at the desk after a brief nap, was wearing a polo shirt and khaki shorts now instead of athletic wear. Pulling himself away from a heated conversation with a housekeeping manager in street clothes, he smiled like we were old friends and told me there was a Starbucks down at the end of the drive, across the street from Weigel’s. I thanked him for reading my mind and shuffled out into the overcast late morning.

Sometime after the coffee started working its magic, I began to hear in the rhythms of the coffee shop the ways that growth in Knoxville is connected to growth everywhere. I couldn’t put my finger on it at first, but I realized gradually that the sound I was hearing was a sort of capsular din: the dissonant collisions of music from dozens of speakers, the insistent hissing of car engines, and the whine of computer fans—the background noise of twenty-first century America.

The Belgian philosopher Lieven de Cauter offers an analogy to understand this sound. He argues that we live in a “capsular” society. We drive around in cars with the windows tightly closed, enveloped in the warm embrace of our own music or podcasts or whatever, largely oblivious to the experience unfolding on the other side of the windshield. We make our way to and from enclosed, self-contained, climate-controlled places—remarkably similar, the philosopher points out, to space capsules. When we arrive, we often isolate ourselves in headphones, or spend our time staring at phone screens, impervious to the attempts of strangers to catch our attention. From moment to moment, place to place, we inhabit capsules, until sleep carries us off to the total enclosure of dreams.

Knoxville, like the rest of the country, is becoming a capsular city. Nowhere is this more evident than at Starbucks. Sipping my coffee and mulling over Lieven de Cauter’s capsular society (I had read the book just a week before leaving Florida), I thought about how the things we consume are shrink-wrapped like the places we inhabit: hermetically sealed, individualized. Before reaching us, they travel like packets of information on a digital network, grouped in Box Number X of Y Boxes, described on a manifest, containerized, palletized.

Hoping to ease the anxiety of this realization, I pulled out a notebook and started writing. Here are my notes:

A long line of Honda CR-Vs, Ford Explorers, Chevy Colorados, and Toyota Camrys wraps around the little café like a rumbling, mechanical boa constrictor. A delivery truck idles at the back of the parking lot while the driver rolls pallets loaded with cardboard boxes of paper cups and cellophane-wrapped bagels and cake pops and bulk boxes of sweetener packets into the café through the spacious rear door. Except for the people placing orders and the people picking them up a little further down the line, every car is sealed tight.

Go to any Starbucks in the country at 11:00 AM and you are likely to see the same cars, hear the same sounds, and smell the same fumes.

Inside, the sound of the road and parking lot is replaced by music. “Heat Waves” by Glass Animals plays over the speakers in the café, a little louder than comfortable. Behind the counter, one of the crew asks another, “Are we out of caramel syrup? We’re out of everything.” The smell is familiar: coffee, of course, but something else, too, lingering in the aroma of dairy and burnt bread. The air is neither hot nor cold, but perfectly air-conditioned. “Headspin” by Hi Frisco plays as I work through the queue and a barista coos at a Jack Russell Terrier in the drive-thru lane. I order a Pike Place Roast, grande, with cream and Splenda. The Jack Russell rides away in a white Ford Ranger with an orange T on the rear window. It is the kind of middle-American truck you would have been surprised to see at Starbucks Coffee twenty years ago. Now there are more pickup trucks than sedans in the drive-thru. I move down the line.

“I’m going to sleep well tonight,” an employee shouts over the music as she clocks out to leave. “I want to take a few Nyquil and just curl up.” A Ford Focus exits the drive-thru and a Nissan Rogue takes its place at the window.

By the time I receive my coffee, the music has shifted to show tunes. Judy Garland sings “Over the Rainbow” while I tear open my three little packets of Splenda and swirl the elixir with a little wooden stick. Pallets from the truck outside crowd the back hallway. Taking a seat, sipping the coffee I’ve had a hundred times before in cafés and break rooms, I close my eyes and try to discern what is distinctly Knoxville in the coffee shop. “Under the Sea” from The Little Mermaid takes over for Judy Garland and I am at a loss. I finish my coffee and toss the cup in the trash with the discarded little sugar packets and sticks and napkins. The sound of the road pours in through the open door on my way out.

In the drive-thru line outside, I see a grad student sitting in the driver’s seat of a Honda CR-V reading David Lack’s Darwin’s Finches. We look at each other for a moment through the barrier of glass and steel and plastic. I nod and he looks back down at his book.

I cross the busy street, back to the Country Inn & Suites.

7.

Relieved of the throbbing headache by coffee and a cellophane-wrapped croissant, I returned from Starbucks in a more positive mood. Our next stop would not sustain it, however. It was the day after Christmas. With no friends in the city and family only newly arrived, we had nothing to do. We went to the mall. I kept my dark thoughts about the capsular society to myself as we merged onto the hissing interstate and made our way there.

The West Town Mall was built in 1972, during the first passionate flush of American mall-building. When the mall opened on the first of August in that year, “several hundred people were ready to rush through the doors to the enclosed, air-conditioned” shopping center, a reporter wrote, following remarks by the developers and local politicians. Mayor Kyle Testerman spoke first, assuring the crowd: “It is developments like West Town, coupled with the economic confidence of the gentlemen who financed and spearheaded the development, that can launch the city into a very enviable economic period.” Inside the mall, equal in size to “17 football fields” spread (with ample parking) across 85 acres, shoppers could enjoy 75 stores nestled among “graceful plantings” and a fountain anchoring “an area where shoppers may sit and rest, if need be.” Nearly fifty years later, the planters and fountains remain. Richard Nixon was President of the United States when the West Town Mall was opened. Jimmy Hoffa would be alive for three more years and the internet was a toy for military planners and grad students at the country’s elite universities. The West Town Mall may as well be a historic landmark.

Travel writers prefer to overlook the mall, but they should not. Whether it takes the form of a walkable shopping plaza among some old buildings downtown, an open “Town Center” concept in beige stucco, or the old multiplex buildings we grew up visiting, the mall is where city planners, developers, and entrepreneurs insist we want to go. With no other options anyway, I thought I’d take them up on it. Perhaps revisiting the mall with an ear attuned to the sounds of the new city would help me understand it in a new way.

It turns out the mall sounds a lot like Starbucks. It is everything and nothing, everywhere and nowhere. In the mall, you can never get lost. Not because it isn’t simultaneously sprawling and confined, dark and bright, linear and labyrinthine. It is all of those things at once. In the mall you can never get lost because you’ve always already been there. Anyone born pretty much anywhere in the world since World War II knows exactly what the mall is. For most of us in America, no matter where we are, entering the mall is like returning home.

Adrift in the sea of nowhere noise, I attempted to anchor myself with notes scrawled in my notebook.

“60 year old man,” I wrote, “selling wooden models.”

“A clockwork model of an M1 Abrams battle tank: ‘That’d make a nice piece for your desk.’”

“BUD LIGHT”

“Lucio Fulci in Bath & Body Works.”

“Teenagers put YouTube on the TV by Swarovski.”

“NO SIGNAL.”

I took a seat on the frowning little couch next to the defeated TV and cracked open the brand-new copy of Knoxville, Tennessee: A Mountain City in the New South I had picked up at Barnes & Noble by the mall. William Bruce Wheeler, an historian emeritus at the nearby University of Tennessee, argues that the history of the city has been shaped by an apparent contradiction between “Mountain” tendencies and “Southern” tendencies. He concludes that the city (like any other American city, I believe) has engaged in a process of self-invention and self-delusion since the Civil War. “Progress exacted a high price,” Wheeler argues, as the people who moved from the mountain hamlets and farms surrounding the city “soon learned that urban life threatened to cut them off from the culture and institutions they valued so highly.”

Looking and listening, I saw firsthand the flattening forces that Wheeler describes. In the courtyard by Belk and Bath & Body Works, it was impossible to tell whether anyone had ever possessed a culture or an institution outside of the mall. The cavernous room burbled in a low din of murmuring voices and laughter, TikTok and YouTube videos on phones, music from the surrounding stores, rustling shopping bags, television screens, footsteps.

If anyone in the mall possessed a culture of their own, they left it behind in the cars, trucks, vans, and SUVs out in the parking lot.

On the way out, a man raced past us, tall and lean, his strides accentuating a cyberpunk goth costume: black tank top, ultra-wide-legged black pants with straps, matte black combat boots. His gait was unnaturally long, the straps swaying in his wake, and he carried a single four-foot-long black light tucked under his arm. He made it to the exit door a moment ahead of us and stopped, immediately, to light a cigarette in the parking lot. He sighed, relieved, and strolled slowly away.

So did we.

8.

Later that night, locked up in my hotel room but seeking some connection with all the other people in their capsules that day, I downloaded a copy of the book the graduate student in the Starbucks drive-thru was reading: Darwin’s Finches, by the British biologist David Lack.

Lying in the bed at the Country Inn & Suites, I yawned my way through Lack’s specialist discussion of ornithological details but took note of the way he attempted to navigate the contrasting traits of the finches on the islands and the birds on the mainland a few hundred miles away. I turned to the soundscape for cues; Lack, like Darwin before him, turned to the landscape.

“Seeing every height crowned with its crater,” Darwin had written of the islands in The Voyage of the Beagle, “and the boundaries of most of the lava-streams still distinct, we are led to believe that within a period geologically recent the unbroken ocean was here spread out. Hence, both in space and time, we seem to be brought somewhat near to that great fact—that mystery of mysteries—the first appearance of new beings on this earth.”

In contrast with the wonders of natural selection evident in the jagged, volcanic newness of the Galapagos Islands, at Starbucks and the mall the city’s organic soundscape felt old and barren. For some, the beige sameness of these places may be a sort of comfort, like a late-night platter of eggs and hash at Waffle House, or a reality TV show at the end of a long day. Looking around my hotel room, however, instead of comfort and warmth I felt unsettled, empty.

These were not places to listen for Knoxville.

9.

The next morning, we had an idea. If Starbucks and the mall sounded like nowhere, perhaps what we needed to do was return to the city’s pubs and breweries. Boosters everywhere insist that these places practically overflow with independent local spirit. At breweries, especially, the parking lots are always full; the food trucks parked on the lawn handle interminable lines of customers; and the most successful establishments seem to colonize the city, leaving a golden wave of hops and barley in their wake as they roar from old warehouses in the center of town to recently abandoned Applebee’s on the periphery. Ears open, then, and inspired by that first night at the Preservation Pub, I set out on a tour of the Marble City’s dive bars, breweries, cideries, and themed pubs.

Compared to the mall, these places pulse with a contemporary spirit that is difficult to pin down. After weighing a few names for it (“Urban Outfitted,” maybe, or “Wood Watchers”), we decided to call it Urban Upcycle.

The choices delineating Urban Upcycle are no less intentional than those shaping the mall—indeed, many stores in the mall strive to evoke the aesthetic—but they try to hide their focus-group roots behind a veneer of conscientious sustainability and bohemian style. The music is louder, gathered from Spotify playlists a few lines deeper on the screen than the music playing in American Eagle. The lighting is darker, less fluorescent. There is more wood and black powder coating than steel and plastic. Instead of advertisements, there are murals. Instead of earnestness, there is irony.

The mall elides America’s brutal class divisions by reducing everyone to a shopper. Even workers wander the racks at competing stores on their breaks and eat lunch at the Food Court with the throng. Urban Upcycle pubs lean into the class struggle by rigidly segmenting the market along class lines. For example, the Preservation Pub, a dive bar rather than an upcycled one, attracts impoverished weirdos: artist types, students. Gypsy Ciderhouse attracts the suburban weekenders encamped on the city’s edges, UT football fans, e-mail workers, guys in trucks. Peter Kern Library, a kayfabe speakeasy (passcode: “1974,” according to Instagram) in a heavily capitalized downtown hotel, appeals to the city’s ruling sect: tenured professors, attorneys, property developers and their guests from Nashville or Charlotte.

In each of them I found people like Ethan, to be sure. Most of them were working their way through crowded nights in the envelope of silence, but others let their hair down in the shouting din. Two men came into the Preservation Pub, for example, wearing stiff collars and lacy cravats like stout Dutch merchants in paintings by the Old Masters. These two Delft merchants knew everyone, bumming cigarettes from friends at the bar and smoking them in short, shallow, aggressive drags. Amid the din, somehow, they held a paranoid conversation about someone’s grad-school boyfriend.

“How are y’all tonight?!” I shout-asked from my seat at the bar, anxious to find out if they had come from a Renaissance fair or something.

“A hell of a lot better now,” the taller Dutch merchant said, loosening his cravat and looking past me to the other end of the bar. “We just got off work.” He turned back to the conversation and the pair moved to a table before I could say anything else.

In these places you can hear America’s byzantine class arrangement revealing itself in unexpected ways. At Barrelhouse, Survivor’s “Eye of the Tiger” or any of the songs you know by Journey ran their tired course over the loudspeakers for the customers at the bar, but on the way past the kitchen to the bathroom I heard Cattle Decapitation on one trip and 2 Chainz on another. At Peter Kern Library, the host offered us “your choice of gin” but made us promise to keep our voices down. A fraying copy of Emerson’s essays and speeches sat on the bookshelf next to us. I picked up the book and opened it, at random, to the Divinity School Address.

“Through the transparent darkness,” I read, “the stars pour their almost spiritual rays.”

At the next table, a man said that drinking $2,500 bottles of Pappy Van Winkle bourbon “made him a better drinker.”

Speaking of the stars, Emerson continued: “Man under them seems a young child, and his huge globe a toy.”

“The cool night bathes the world as with a river,” he intoned, “and prepares his eyes again for the crimson dawn.”

Across the dark and narrow aisle from the Pappy Van Winkle drinker, a family reminisced about the good old days: Sunday afternoons when men from Knoxville companies would knock on the front door of their parents’ house, over by Johnson City, and offer to pay fifty or a hundred dollars to dump industrial waste out on the back side of the property. “I think that’s how they put Cindy through college,” a man in a polo shirt and blue jeans exclaimed. They all laughed, quietly.

10.

So much for coffee, malls, and breweries. Now that life was returning to normal after the holiday, it was time for the Main Event, the Big Enchilada: Pigeon Forge. You might call the place Gatlinburg, mistaking it for the smaller town up the road, or you might just know it as Dollywood. Frankly, it doesn’t really matter. These places have stitched themselves into one of the most enduring tourist attractions in the United States. If you’re not from Florida, can you tell the difference between Orlando and Winter Park? Do you care?

If you’ve been to International Drive in Orlando, you’ve been to Pigeon Forge. You’ll find the same Japanese steakhouses, the same upside-down WonderWorks, the same go-kart race tracks, the same dinner theaters, equally gaudy but redneck-themed instead of vaguely historical. You’ll find the same outlet malls, the same hotels, the same traffic. Like International Drive, the road is a valley threading a meandering course between pickpocket mountains of gift shops, ticket booths, and drive-in restaurants.

Up a little higher, you see condos and cabins huddling among the hardy trees that thrive on the slopes of these ancient, rolling mountains, a billion years old beneath the skein of commerce. The promise of these cabins and mountains is the point of this place. Tourist brochures show the mountains resplendent in their autumn finery or dusted with winter snow, steam locomotives carrying visitors to the simplicity of the Appalachian past like Harry Potter making his way to the enchanted castle on the Hogwarts Express.

Down at eye level, though, the landscape is disenchanted. The owners of these tourist “attractions” have often done little more than throw a glass door on the front of a beige box beneath a sign that says something like “Mountain Air Gifts” or “Appalachian General Store.” Above the tinkling bells on the doors, you hear the cars streaming endlessly by on the parkway, gasping and hissing in unison like some sort of carbon-belching dragon. In the autumn, when everyone is here for the turning leaves, the traffic is heavy enough to induce a panic attack. Then it rumbles like a giant beast, a whale or an elephant passing signals through the earth, and you can hear it inside the shops. The air conditioners roar in the summer and the boilers hiss and steam through the winter. Think of the wastewater processing plant somewhere back in the hills, giant wheels spinning through green or beige tanks, filtering the bleach and detergent from all the towels at all the hotels and vacation rentals. Listen to these pieces in unison and you will hear them working toward a goal. Pigeon Forge is a money-printing machine.

We walked around a gift shop spanning three labyrinthine floors of T-shirts, hot sauces, bird feeders and mailboxes, sweatshirts and jackets, outsider lawn art, lanyards and postcards and keychains, stickers, flags, little spinning toys, Harry Potter-themed Christmas decorations, Aqua Massage beds, a store-within-a-store selling CBD lotions and tinctures, Elvis Presley and Dale Earnhardt memorabilia, backpacks, lighters and knives, and a live black bear. For a few dollars a head, you could step outside and spend a few minutes gawking at the bear behind a chain-link fence, on a brown concrete stage with a little pile of brown straw in the corner and a tub of water, but the bear was on break when we visited.

A few moments before, when the bear was still on duty, an entire family teetered on the edge of a nervous breakdown arguing about whether the son, a bright-eyed, red-haired ten-year-old, should be allowed to spend his money to see the animal. Dad thought it was a terrible idea, but he had already been in a bad mood when I saw them downstairs by the fish-shaped mailboxes and patriotic bumper stickers. “If that’s what he wants to do, I don’t see a problem with it,” his mother said. “It’s his money.” That was final. They paid the attendant, a bearded man in cargo shorts and a green pocket shirt who was also the bear’s keeper, and filed through the steel-paneled door.

It was seventy-four degrees on the December day that we visited, but a blizzard rolled through the following week. Back in Florida, I imagined the sad little bear stage blanketed with snow. The bear pokes her shaggy head through the chain-link gate and feels a little thrill of joy at the sight of the soft snow. There, at least, was a natural thing to soften the hard edges of this money-printing machine for a little while.

11.

On the final day of our trip, after one last breakfast with my transplanted family, we decided to take the mountain air on a hike at Ijams Nature Center. Originally set up in the 1920s as a bird sanctuary by naturalists H.P. and Alice Ijams, today the Ijams Nature Center sprawls across hundreds of acres of wooded traces, rocky switchback paths, meadows, and meandering boardwalks, taking in the Ijams’ original 25-acre home site and a couple of neighboring quarries along the banks of the Holston River. It’s the kind of place where early on Saturday mornings you are likely to find the Subarus-with-stickers crowd, professors and grad students with fancy sandals, colorful activewear, and nice mountain bikes out taking the air. During the week after Christmas, however, the nature-lovers were out of town, visiting their families back in New York and California. The park was crowded, instead, with locals who couldn’t bear to spend another minute staring at their phones on the couch but had already been to the mall twice since opening their gifts.

We parked our Volkswagen-with-stickers among the trucks and SUVs clustered outside of the Visitors’ Center, which was closed for the holiday but surrounded by thoughtfully cultivated green spaces dotted with picnic tables and interpretive signage. These were thronged with running children, smiling, running dogs, grandparents, and bored teenagers. Thrilled by the cool mountain air, we chose a promising-looking trail leading up into the trees on the side of a steep hill and set off to see where it would lead.

Just behind the trees, the sounds of the families down at the Visitors’ Center and parking lot faded abruptly away. We heard the wind scraping the leaf-bare winter trees, the crunching of gold, red, and brown leaves beneath our feet, an occasional airplane accelerating down the runway or touching down at the small airport on an island opposite the park. The low, insistent hum and percussive crunch of a rock conveyor and crusher at a quarry on the other side of the river underlay everything else, present but not overwhelming. Though we found ourselves in the heart of the Ijams’ original ornithological preserve, not a single bird spoke in the trees overhead.

Here, I thought, in this gentle melody of river life and industry, laughing families and crushing stone, was the real Knoxville. I could feel the miles on the road slipping away, taking with them the loneliness and anxiety of the malls and Starbucks and Country Inns & Suites of the American suburban hinterland that spreads for hundreds and thousands of miles in every direction. Here there was no playlist to put me in a buying mood, no TikToks or reels shouting over my thoughts. Here there was only wood and stone, leaves and water.

We went farther down the trail, past another open area teeming with families, and then up a demanding set of stone steps carved into a rocky prominence. They led to a windswept spot at the top, overlooking the river on one side and, on the other, the small field we had passed beyond the trees below. I watched a Golden Retriever chasing a tennis ball, a ginger blur racing across the rich green field below, and I was touched by how vehemently alive we all were, together. I get it, I thought. Here the careworn suburbs and offices were worlds away.

Still, even here you cannot quite escape the forces that shaped those suburbs and offices down the highway. Another trail follows the gentle curves and long straightaways of an old railroad right-of-way down to the rock quarries that fueled the city’s growth before its center of gravity moved to the University of Tennessee. Along the way, corporate-sponsored interpretive signs attempt to frame the extensive environmental damage done to this place as a unique part of the landscape. Next to some overgrown ruins, for example, a sign notes that these old chimneys were what remained of a lime kiln that operated on the site after World War II. Sponsored by Dow Chemical, the sign explains:

“Essentially, they were high-temperature furnaces. Crushed limestone (calcium carbonate) was dropped in at the top and heated up to over 1,800 ℉. The resulting powdered agricultural lime would fall out at the bottom to be shipped away on the railroad.”

Under this explanation, an illustration shows an old pickup truck, painted in the bright red livery of the Dow Chemical Company, backed up to a happy worksite. I wonder how many tons of Tennessee lime were shipped from these kilns to Vietnam and sprinkled over the corpses there while Dow product Agent Orange worked its evil sorcery upon the people and land.

Another Dow-sponsored sign narrates some phases of the area’s environmental history by highlighting the interaction between people and Chimney Swifts. “Historically,” Dow reports, the birds “nested and roosted inside hollow trees. But when the pioneers cleared the land and cut down those old trees, the swifts proved to be highly adaptable. They started using the pioneers’ chimneys.”

The sign does not mention that the Chimney Swift population has dwindled by upwards of 70% since the 1970s. Scientists point to two likely causes of this devastating decline: habitat loss and pesticides. The habitat loss, we learn from the sign, has been going on for centuries. Dow would rather not talk about pesticides.

If you read the signs, you could see this beautiful, quiet day in the woods as the result of a long, mostly positive interaction between humans and nature. Thank goodness we have these woods, the signs suggest, so you can get away from it all and enjoy the majesty of nature. Dow wants you to see the company as an important champion of this majesty. The signs point a bright red finger at chemistry at work in the kilns, science making life better for everyone, resource stewardship doing it all responsibly. Of course they don’t mention the “forever chemicals” in the groundwater. They don’t concede that the place would be better still if the quarries had never been excised from the mountain, the railroads never spiked into place, the kilns never built.

Still, Ijams is beautiful. I stood beneath the “keyhole,” a giant stone portal carved by the old miners who worked this place in the 1920s, and was momentarily overwhelmed by Knoxville. I thought about the old miners’ families, way up back of the mountains, who watched their sons and brothers climb the Pullman steps and take the short ride down the rails to the city below. I thought about the countless young people who have passed through the gates of the University of Tennessee, stayed a moment, and then set out again, carrying little bits of limestone in their pockets to the far corners of the globe. I thought about Emerson’s Divinity School Address, quietly resting in its tattered volume on the shelf of the bar downtown, likely never to be read again. I thought about our old home, back in Florida, and I bawled like a child. The past is always with us; it is always gone.

12.

Here is the soundtrack of that moment.

There is deep winter silence. A bird calls from somewhere down in the valley. Across the river, the conveyor belt rasps and rumbles, on and on, tumbling stones that have been buried beneath the earth for hundreds of millions of years onto a massive pile. Down below the rock mounds, the river flows in its quiet way. It flows past a city that is turning itself into every other place as quickly as it can. Highways, craft breweries, tourist attractions, chain restaurants, strip malls, and subdivisions colonize the Appalachian landscape, each contributing its own notes of discord to the growing din. You find it hard to hear the silence again.

Despite all this, a few minutes downstream at the Preservation Pub, people are still doing their best to come together and enjoy as many moments of joy and freedom as they can snatch from the scorched-earth vortex raging all around them. Cornbred Worley had it right. Heathens, indeed.