Do you feel that? That creeping sensation that something isn’t quite right?

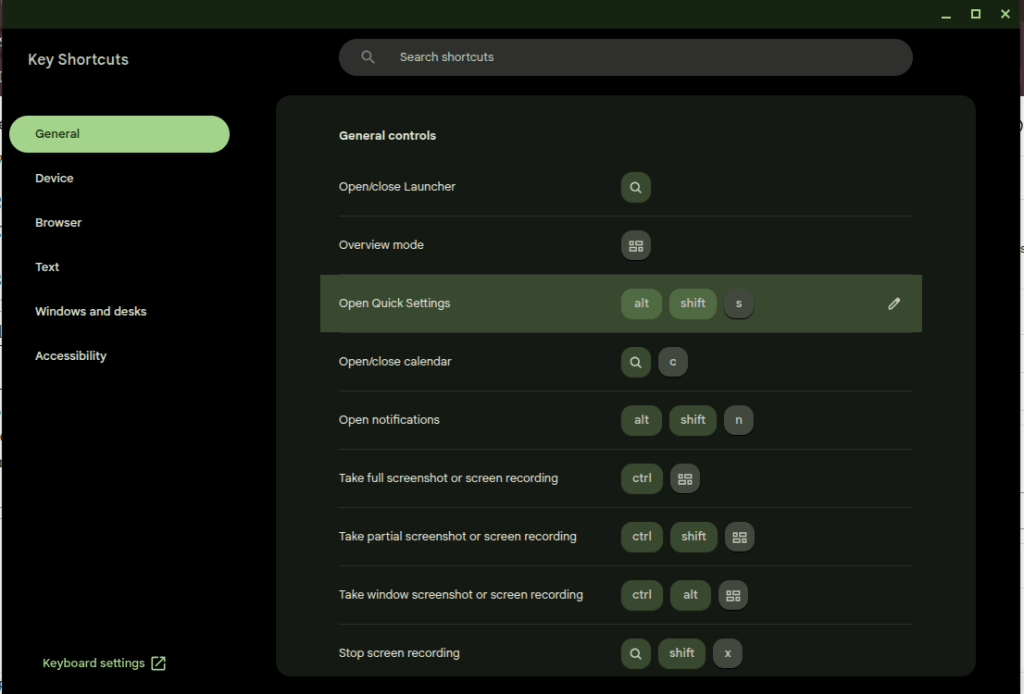

- Maybe it’s when text buffers in multiple Windows apps randomly stop accepting text until you reset the application state – like pressing “Save” or restarting the app. (Maybe, especially, it’s when this happens on multiple computers in different apps on the same day – so you know the issue isn’t limited to one machine.)

- Maybe it’s when Outlook on Android takes you to the last email you received instead of the newest one when you open the notification for the new one.

- Perhaps you feel it when you are reading over some text a colleague wrote and everything is making sense until, out of nowhere, there is a wild inaccuracy on some small but foundational thing.

- Perhaps it is when Evernote opens the wrong note when you click on the one you wish to open, and you have to click twice and wait a moment for the application to sort out with the database what the heck you’re actually looking for.

- If none of these, maybe it’s when you’re looking over an infographic and you see some small thing that doesn’t make sense, like a line that randomly ends before it should, or some garbled text.

These are basic problems (how to handle a text buffer, which record to open when a user clicks on its representation in the UI, whether the company you work for is a Gold or Platinum (or Golden?) Business Partner, how to draw a line from Point A to Point B, and so on) which, until recently, had long been solved. Imagine the creeping dread, then, when they all happened to me yesterday.

Thinking about it at the end of the day, writing in my old-fashioned paper journal, I wondered: Did AI do this?

I suspect the answer to this question is yes. I know AI is responsible for the problems with writing and infographics, and I strongly believe it is responsible for the application bugs, but even if it isn’t, walk down this road with me a little ways. I think it leads to an interesting place.

Consider a few signals in the noise. First, AI coding agents are pushing to and pulling from GitHub so hard that the service is regularly crashing. Microsoft is working hard to dedicate infrastructure to the new demand, I’m sure; but that demand, with AI users stressing the platform harder than ever before, will remain for as long as the current craze for AI agents persists. Second, every major software vendor is moving toward (or has already arrived at) an AI-first approach to coding. These companies aren’t doing this because they want more code, or at least that’s not the main point. What they really want is fewer people writing the code they plan to ship to customers, so they can deliver the same level of service at reduced cost.

This means that those engineers who remain have fewer colleagues to check their work and fewer incentives to write their own code. It means that the code they have to review was mostly produced by an opaque mathematical algorithm attempting to predict what a human mind might do, given a certain problem, and not a human mind itself. It means that the machine they have to reason with when there is a problem with the code is trained to agree with them and then try the problem all over again. It doesn’t learn anything; it simply chirps a response which is likely to work. And, crucially, the response does work most of the time. It’s a miracle. The machine generates code that works; it’s nearly instantaneous; it passes all the tests; and it made the engineer feel good about what it was doing along the way. No one really needs to understand it, because it is working and they can follow it superficially, and no one needs to own it (I mean in the intellectual sense, the way an author owns their text or a mechanic owns their machine) because they can’t. They didn’t make it. They didn’t twist the wrenches.

Now put it all together. AI is writing more code than humans, it is shipping in major applications that we use every day, and no one really understands the myriad ways that it interacts with the other code in the application (or the operating system) because there are fewer hands on deck at the major software companies to check it.

A similar problem exists in the vast universe of texts which shape and condition our world. Consider a few examples of how this can go wrong in a software implementation project.

- A resource-strapped government agency requests proposals for a new system and uses a combination of AI-generated text and copy-pasted text from the last proposal to create the new one. The resulting request may or may not make sense.

- The proposer uses AI to generate their response. It gets most things right, but introduces some strange errors which misrepresent the solution. These are buried deep in the text, and it all sounds plausible, so the human reviewer misses the error.

- The government agency uses AI to summarize the proposals. It may even ask AI to make some recommendations about the best ones. These machines incorporate the errors into their review and summarize or recommend accordingly.

- People on both sides of the deal use Google AI Overviews to research their answers to questions that come up during negotiations. These are often right, but the devil is in the details, and the AI Overview doesn’t understand the context because it’s a prediction model.

- AI creates the training manuals for the solution. The algorithm cannot generate text from what was actually built, only what is in the training data. A person must bridge the gap. Do they know the difference? Do they have the time, energy, and motivation to care?

I know that software code and the projects that put it in people’s hands have always been full of bugs and hampered by problems like this. The difference, I wish to suggest, is that someone in the past was intellectually accountable for these problems. A person wrote the code; a person wrote the document; a person mastered the material to the best of their ability, thought about it, and then formulated an argument as to how it should be applied to the world.

Software bugs emerge from strange circumstances. A person, while thinking through the problem, has time to consider these circumstances. If they’re writing code they will try and fail, try and fail, again and again and again, until it works. Sometimes they will encounter the circumstance directly. Sometimes they’ll think about it when they get up to use the restroom. The point is, every time they fail, they have to think about the problem; and they have to keep thinking about it until the problem is solved. It takes time, and it takes pain, but when they come out on the other side they have understood it, by God, and they can be accountable for it.

Writing is the same. A writer sits down at the keyboard or the legal pad with a vague idea, like “I think AI is breaking the world,” or “I think the French Revolution was caused by the frustrated bourgeoisie,” or “I need to explain how to use this software,” and then they have to struggle, word by excruciating word, to explain themselves. They have to read and take notes and think; and then like a coder they have to try and fail, again and again, try and fail, to read the right stuff and take the right notes and put words and evidence together in a way that will explain the idea. Along the way they will have thought about it so much that they likely never want to think about it again. But they own it. Ask them about it and they can answer you in detail.

That world is gone.

There is a strong possibility, therefore, that we are standing on the brink of a giant and terrible pit of despair that is likely to break more than just the text box on Microsoft Teams. The problems are going to proliferate, and then they are going to cascade. Systems will get worse. Some of the problems will not be revealed until people die as a result. No one will really know how to correct the bugs because no one will have owned the code in which they were introduced. When an engineer delves into the problem, fixing the bugs will be like playing whack-a-mole, because Function X was written by Claude Code using Opus 4.5 and interacts with Functions Y and Z, which were written by an engineer in 2011, who is now retired, and Claude Code running Opus 4.7, respectively; and all three were included in code that was rewritten in a more efficient language by another AI agent last quarter as part of an attempt to gain some memory headroom on the virtual machines running the app. It’s a nightmare, and production can’t simply stop while they figure it all out. The AI agents will be making code changes along the way because the managers have to keep up with their KPIs.

That’s just software. Books, music, art, and movies are another thing. All of them are worth the same thought experiment.

Confronted with a deteriorating product experience and unhappy customers, companies will face a difficult choice: keep patching the bugs while shipping new features and hoping for the best, rip out the problematic AI-generated code and let humans rewrite it, or blow up the code and simply start over.

In any event, I think smart companies by the end of this year will be thinking about the Last Human Milestone. Think of that as the last point when humans wrote all of the changes shipped in the product. For many companies the LHM is now almost five years ago. But even then, in 2021, early users of GitHub Copilot were still mostly in the pilot’s seat. The tool could generate some code, but the human had to own the overall solution. For most companies, I think the LHM was probably in late 2025. This is when Claude Code exploded and the rhetoric around AI coding agents shifted from probability to inevitability. Capital follows rhetoric, and we are now, I believe, starting to see the results.

Yesterday it was a creeping sensation, a few momentary glimpses into the void. Tomorrow that creeping sensation may burn like sciatica. The Last Human Milestone will be a starting point for the difficult decisions we all must face. Can we go back?

(Update, the next day: Here is newspaper columnist Dave Barry struggling to convince the Google AI Overview LLM that he is still alive. Notice how it gets more and more wrong with each attempt to solve the problem.

Update, the next, next day: “I’m going back to writing code by hand,” one developer writes, because “AI writes features, not architecture. The longer you let it drive without constraints, the worse the wreckage gets.”)