1.

Outside my window there is a shaft of sunlight streaking across the fence. The fence is dark brown, a color that hasn’t been popular since the 1970s, and the local paint store keeps the formula in its notebook just so our condo association can paint the fences and trim. They call the color “Westwood Brown,” and if we ever decide to change the color scheme here, they will probably hesitate to pull it from the book because it is like a collector’s item now. It is a story they can tell new employees. Even so, if you catch it when the light is just right, like right now, Westwood Brown can transport you to a different time: the Halston era, the epoch of the land yacht Coupe de Ville. It is the golden hour, and the light points, like a celestial digit, straight at the spreading petals of the brilliant flame-colored bromeliads my wife planted along the fence. I think, I should take a picture of that.

I am unsure if it is me thinking about the picture, or if it is Instagram thinking it for me.

This week I quit Twitter. It’s not like I had much of a presence there, so we will be fine without each other. I had something like 150 followers, followed around 1,200 accounts, and scrolled over there occasionally when the rest of my addictive scroll-holes were drying up for the day. Lately I had been playing a simple game every time I opened the app. If the first post was someone (1) wailing about the hypocrisy of the other side in the culture war, (2) flexing their success, or (3) piling onto the controversy du jour, I would close the app and move on. I have had very little reason to scroll beyond the first screen in the past few weeks.

I’ve quit Twitter and deleted Facebook before, but I was encouraged to close my Twitter account this time by the spate of articles that popped up this week frankly raising the question: what are we doing here? Quinta Brunson’s thoughts on minding your own damn business afford the best example, but the algorithms must have registered some little chemical twist in my pituitary because I kept running across pieces, like this profile of Twitter-person Yashar Ali, or this interview with someone who quit social media, that chip away at the foundation of social media’s necessity by questioning whether we need it or whether the people we see there are as significant as they appear.

The cynical among you are likely to say that these are dumb questions; that of course social media people are unworthy of our attention, and of course we don’t need it. I think making this claim is a bit like playing a character, though: that of the discerning sage, you probably think, or the intelligent free-thinker standing on a stage opposite the vapid follower and the bankrupt influencer. Which of you is Malvolio and which is Toby Belch will depend upon the attitude of the viewer, however. The postures and costumes are different, but from far enough away the result is the same. They strike us now as just a couple of old assholes, rendered immortally luminous by a poetic genius of world-historical importance. On the internet, there is no poetry to illuminate them. Both characters are constricted by straitjackets of bullshit, and both of them seem terribly unhappy.

That brings me in a roundabout way to the question I sat down to ask in the first place. What am I doing here? I’m pretty sure I’ve asked this question before, but I don’t want to go back and check. That would be depressing, like reading an old poetry notebook or flipping through an old diary from high school. I’ll reframe the question this time instead and try to write my way to an answer. Is what I’m doing here, whatever it is, balancing out some of that unhappiness? Is that even possible?

2.

Lately people have been writing about how much they miss the old internet. The new internet is too vanilla, they argue, too boring and perversely commercial, like a shopping mall. I am normally inclined to agree, but the other afternoon I woke up from a nap and read about alternate reality games and obscure social media characters on Garbage Day. Perhaps it was the triazolam and nitrous oxide left over in my system from oral surgery that morning, but I had trouble making sense of it, in the same way that I once stumbled gape-mouthed across arcane forums and exotic communities like a rube from the meandering suburbs of America Online. The old internet, with all of its nonsense, all of its randomness and quirky passion, is still with us. I’m looking at you, Malvolio, for the next line. Of course it is, you might say. It’s all just people.

But did the old internet make people happy? The internet seems always to have been a contested space, a rambling assemblage of insider communities whose best days are very recently gone. I remember my first connection, a dial-up hotline to AOL across endless air-conditioned days and nights in the summer of 2001. I was late to the party, five years behind my more affluent peers, and anxious to make up for it in sheer eagerness. I joined email lists, clicked through webrings, posted to forums. I had been led to believe, probably by bewildered news anchors or breathless magazine articles, that the web was a brand new thing. What I found instead was a bunch of conversations with no beginning and no end. It seemed like the authors of my favorite web pages had all recently stepped away from their keyboards. Communities were cantankerous places, hostile to newcomers, where old fires never seemed to burn themselves out. There was the Eternal September of 1993, for example, named for the time when all the noobs from AOL flooded Usenet and never left. At least that’s how the old Usenet admins have it. For my part, I started moderating a mailing list and stepped right into the middle of flame wars dating back years. Old-timers had to bring me up to speed on the old arguments so I would know how to intervene. New trolls arrived weekly. Maybe it was a bad list, but anyone who’s spent time moderating an online community knows the struggle.

I think of this when I wonder why we all flocked to MySpace, and then Facebook, so rapturously. The old internet was a place made of text, and consequently a place where strangers spread their shit all over you. You couldn’t avoid it. If you wanted to communicate, you had to work at it. You had to learn the jargon, nod along with inside jokes you didn’t always find funny, assimilate opinions you couldn’t publicly examine. MySpace and Facebook were made of pictures, blissfully free of ideas that couldn’t be communicated at a glance. Facebook remains so. Your mere existence, your possession of a face, is the only cost of admission.

3.

Neither the old internet nor the new, then, has made us any happier than we were in the time before. Rather than happiness, what we’ve gained is access to a sort of dark power bubbling in the river of instant and endless information flowing through the pipes. We use this information to construct stories, and it is through these stories that we harness the power to do work in the world. I can’t help but feel as though we’ve designed the internet to give us information that reinforces the worst stories we tell about ourselves. These are stories of growth and progress in which we appear better and smarter than anyone who lived before us. These are also stories that make us feel empowered as individuals, unique among lesser peers. Meanwhile the algorithms conditioning the flow of information enable us, in a vicious feedback loop, to look away from the stories we’d rather not tell: stories of stasis or declension, of similarity and solidarity.

There is another force flowing in those dark waters as well: the emotive power of suggestive blank spaces. Memes are like atoms, rich in potential and eager to freely associate, but information devoid of context is like a shadow divorced from its object. We can only guess at its sources and meanings. This is precisely the type of information the internet is engineered to deliver, however. You ask Google a question and it gives you an answer.

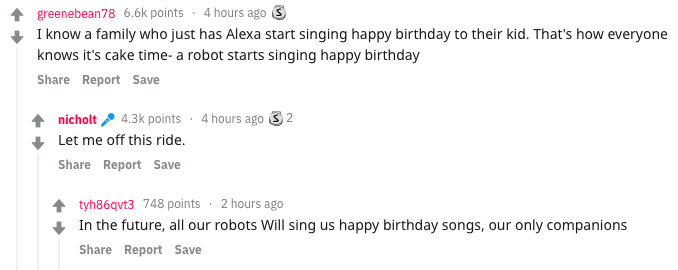

Google’s brand is based on authority and correctness, at least. The rest of the internet is built to keep you engaged. If content exists in a sort of Darwinian state of nature, the most successful information is that which makes you feel. Reddit and the chans are factories producing and serving up the most emotive content. You scroll TikTok or YouTube and the algorithm serves you videos according to their likelihood of keeping you engaged. Twitter and Facebook serve up bite-size nuggets of emotion on the feed. News editors engineer headlines to galvanize you to action. It is not that microblogs, articles, memes, pictures, blurbs, and short videos are incapable of rich contextualization; it is that the creators focused on context are not as successful as those focused on engagement.

Part of what I am trying to do here is counter these tendencies. I am drawn to the stories we prefer not to tell. As a historian, for example, I am fascinated as much by continuity as by change over time. No matter the era in which they lived, informants in the archives shared their similarities with us as readily as they disclosed their differences. Our motivations echo theirs. Many of our creations fall short of theirs. Like strangers on the internet, they rub their shit all over us too. We would do well to wallow in it, though, because we cannot engineer a new world as easily as we can engineer a new user experience. Our culture is built from theirs. We live in the cities and towns they built. We speak their language, worship their gods, read their books. Rather than seeing ourselves as disruptors or innovators, therefore, we might benefit from seeing ourselves as cautious trustees of that world: careful fiduciaries focused on moving slowly and maintaining things rather than moving fast and breaking them.

This perspective need not be conservative. The narrative of constant growth and improvement is the guiding myth of capitalism, after all. I think building an alternative grounded in context, focused on capturing the prosaic or humanizing the proletarian (to the best of my meager ability, at least), can make us feel a little more anchored in the swift currents of a society built to pick our pockets and hold power over us by maintaining a constant state of instability. If this approach can make the internet a little bit of a happier place, then maybe I’m doing some good here.

4.

I failed to mention at the beginning of this essay that I am not necessarily a reliable narrator.

I actually don’t know whether I’m achieving any of the lofty goals I just described. Perhaps, like an artist’s statement, everything I said up there illuminates the principles organizing my work. I like the sound of that, but I’m not sure it’s true. The truth is that I really just work on ideas that I like without worrying about how they fit into some schema. If I admit this, however, then my work in this essay isn’t done, and it remains for me to answer: does doing this make me happy? Now I can’t hide behind a shield of analysis. This just got scary.

Writing for me is a form of exorcism. If I go more than a few days without writing something, anything, I can feel a sort of dark pit forming somewhere inside me. I’ve come to realize that this darkness is death stalking me, as it stalks all of us, from somewhere just outside my peripheral vision. The longer I go without writing (or, to a lesser extent, without creating other things like images or music), the closer it gets, until the feeling of hopelessness is almost unbearable. This sounds like an acute illness, I understand, but each of us is striving to overcome this darkness in our own way every moment we are alive. Some of us achieve it through devotion to family. Others achieve it through friends or work; some achieve it with drugs. Writing is what works best for me.

Every word written is written for an audience. This is obviously true for articles and essays like this one, for books, and so on, but even a journal is just a book we write for our future selves. With this in mind, a few years ago I thought: if I’m writing simply to stay alive, why not publish it? It takes so many rejection letters to get an acceptance, and I don’t have time (this line of thinking goes) to develop a whole submission and tracking process in addition to working, studying, trying to shift gears and be creative, and then somehow writing and finishing some harebrained idea in the first place.

The narrative of progress through technology is here to make me feel good about this. With the rise of Substack and the constant firehose of essays like “No One Will Read Your Book” or “10 Awful Truths About Publishing” or this NY Times article, which found that 98% of books released last year sold fewer than 5,000 copies, there is no better time than now to rethink publishing altogether. There are currently 7,614 markets and agents listed on Duotrope. Many of the “lit mags” on the web and indexed by Duotrope are labors of love undertaken by one or two individuals. They went out and bought a domain and built a WordPress site, just like I’ve done here. What’s the difference?

Ask anyone who writes and they will point out the problem right away. There are few things more pathetic than a self-publisher. Publishing my own work here helps to exorcise that darkness, but it will never feel good enough. The point of publication, especially now that we all have the resources to publish whatever we want, is that someone else thinks what you have to say is worth amplifying. Publishing your own work is like an admission of inadequacy. It feels like saying, I don’t think this is good enough to publish or, even worse, nobody else thinks this is good enough to publish.

This is an indoctrinated opinion. Just like those stories of endless progress that troubled me a few hundred words ago, this opinion serves a social purpose. It is likely that how you see that purpose depends on your bedrock identity. Perhaps you see publication as a form of competition that elevates the best writing over the mediocre, driving everyone to greater heights of achievement along the way. Or maybe you see it as a limiting mechanism that functions, intentionally or not, to push out voices that challenge dominant opinion.

Since I am now in the personal and supposedly truthful part of this essay, I should say that my own view changes based on how strongly I feel about my own abilities. When I am down, probably after a rejection or two, my opinion of publishing is decidedly Jacobin: down with the editors! At other times, probably when I’ve had something published or just written something that I feel good about, I’m as sanguine about the market as Adam Smith.

Perhaps all of my opinions are like this and the problem I am struggling to write around is that we are supposed to be consistent. Do the algorithms know I am as changeable as the wind? Do they take advantage of the distance between my variable opinions and desires and how consistent I think they are? We value being but we are all, always, merely becoming.

This is the real strength of a blog. A book is firm, stolid like our opinions are supposed to be. A book, we might say, is being. The internet is fluid, as variable as the flood of emotions and opinions shaping our daily experience. It is a space characterized by constant becoming, by revising, rethinking. Conversations here neither begin nor end. We’re all just passing by one another, sharing ideas inscribed with light and stored as magnetic charges on a disk array somewhere far away.

This website is my bridge between becoming and being.

This website is a bridge between the old web and the new.

This website won’t make anyone happier, but it’s not for lack of trying.